In many academic and professional settings, such as mathematics lectures and research presentations, mathematical expressions must be conveyed orally. Without accompanying visuals, however, expressions read aloud can be hard to follow, especially for people who are hearing-impaired or who rely on subtitles because of language barriers. For instance, when a presenter reads Euler's formula aloud, current Automatic Speech Recognition (ASR) models often produce a verbose and error-prone textual description (e.g., "e to the power of i x equals cosine of x plus i `side` of x") instead of the concise LaTeX form (i.e., `e^{ix} = \cos(x) + i\sin(x)`), which hampers clear understanding and communication. To address this issue, we introduce MathSpeech, a novel pipeline that integrates ASR models with small Language Models (sLMs) to correct errors in mathematical expressions and accurately convert spoken expressions into structured LaTeX representations. Evaluated on a new dataset derived from lecture recordings, MathSpeech achieves LaTeX generation comparable to leading commercial Large Language Models (LLMs) while using fine-tuned small language models of only 120M parameters. For LaTeX translation in particular, MathSpeech significantly outperforms GPT-4o on CER, BLEU, and ROUGE: CER drops from 0.390 to 0.298, and both ROUGE and BLEU scores are higher.
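CER (character error rate), the headline metric above, is the character-level edit distance between a predicted and a reference string divided by the reference length, so lower is better. The sketch below is a minimal illustration, assuming plain Levenshtein distance over raw characters with no whitespace or LaTeX normalization; the authors' exact preprocessing may differ. The example strings are hypothetical and mirror the Euler's formula case.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of character insertions, deletions, and substitutions turning a into b."""
    prev = list(range(len(b) + 1))  # distances from the empty prefix of a
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free when characters match)
            ))
        prev = curr
    return prev[-1]


def cer(prediction: str, reference: str) -> float:
    """Character error rate: edit distance normalized by the reference length."""
    if not reference:
        raise ValueError("reference must be non-empty")
    return levenshtein(prediction, reference) / len(reference)


# Hypothetical prediction containing an ASR-style "sin" -> "side" error.
reference = r"e^{ix} = \cos(x) + i\sin(x)"
prediction = r"e^{ix} = \cos(x) + i\side(x)"
print(f"CER = {cer(prediction, reference):.3f}")
```

Because CER is normalized by the reference length, one wrong token in a short formula costs proportionally more than the same error in a long one.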

Introduction & Motivation

- Current ASR systems struggle to transcribe mathematical expressions in academic settings accurately, producing verbose and error-prone output.

- Moreover, existing ASR systems do not convert mathematical expressions into LaTeX, which makes the formulas difficult to understand accurately.

Approach

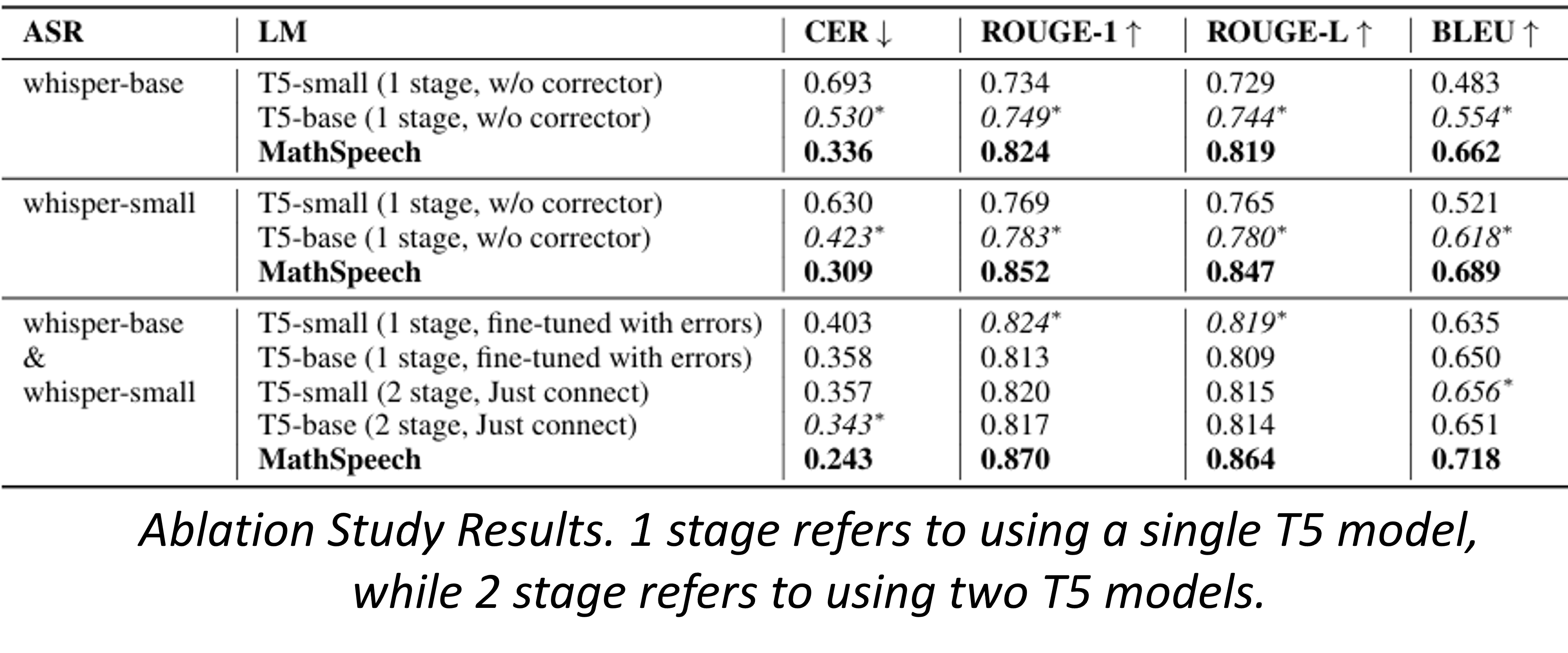

- We attach small language models to the ASR model to post-process its text output.

- The pipeline chains two T5-small models: one acts as the Error Corrector and the other as the LaTeX Translator.

- End to end, the MathSpeech architecture converts audio of spoken mathematical formulas into LaTeX code that accurately represents those formulas.
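The data flow described above (audio, then ASR transcript, then corrected spoken-form text, then LaTeX) can be sketched as a chain of three functions. This is a minimal illustration, not the authors' code: `MathSpeechPipeline`, `fake_asr`, `toy_corrector`, `toy_translator`, and `TOY_LATEX` are hypothetical names, and the toy stand-ins (a fixed transcript, a string replacement, a lookup table) only take the place of the real ASR model and the two fine-tuned T5-small models so the sketch runs without any model downloads.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stage signatures: each stage is just a function.
AsrFn = Callable[[bytes], str]  # raw audio -> spoken-form transcript
TextFn = Callable[[str], str]   # text -> text


@dataclass
class MathSpeechPipeline:
    """Chains ASR -> Error Corrector -> LaTeX Translator (illustrative sketch)."""
    asr: AsrFn
    error_corrector: TextFn
    latex_translator: TextFn

    def __call__(self, audio: bytes) -> str:
        transcript = self.asr(audio)                  # may contain ASR errors
        corrected = self.error_corrector(transcript)  # fix errors, still spoken form
        return self.latex_translator(corrected)       # spoken-form math -> LaTeX


# Toy stand-ins so the sketch runs; in MathSpeech each text stage is a fine-tuned T5-small.
def fake_asr(audio: bytes) -> str:
    # Ignores the audio and returns a transcript with the "sine" -> "side" error.
    return "e to the power of i x equals cosine of x plus i side of x"


def toy_corrector(text: str) -> str:
    return text.replace(" side of ", " sine of ")


TOY_LATEX = {
    "e to the power of i x equals cosine of x plus i sine of x": r"e^{ix} = \cos(x) + i\sin(x)",
}


def toy_translator(text: str) -> str:
    # Unknown inputs pass through unchanged.
    return TOY_LATEX.get(text, text)


pipeline = MathSpeechPipeline(fake_asr, toy_corrector, toy_translator)
print(pipeline(b"\x00\x01"))  # placeholder audio bytes
```

Keeping each stage behind a plain function interface makes it easy to swap in a different ASR model, or to bypass the Error Corrector, and compare the resulting LaTeX.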

Outcome

- For LaTeX translation, MathSpeech significantly outperforms GPT-4o on CER, BLEU, and ROUGE.

- The Error Corrector fixes errors in the transcription text output by the ASR model.

- To train the Error Corrector, we synthesized audio with text-to-speech (TTS), transcribed it with the ASR model, and collected the errors the ASR model produced.

- The LaTeX Translator converts English descriptions of spoken mathematical expressions into LaTeX code.

- To produce accurate final LaTeX, we weighted the LaTeX Translator's output more heavily than the Error Corrector's output during end-to-end training.
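One plausible reading of "a higher weight on the LaTeX Translator" is a weighted sum of the two stages' sequence losses. The sketch below assumes token-level cross-entropy for each stage; `token_nll`, `end_to_end_loss`, the toy distributions, and the 0.3/0.7 default weights are hypothetical placeholders, not values or code from the paper.

```python
import math
from typing import Sequence


def token_nll(probs: Sequence[Sequence[float]], targets: Sequence[int]) -> float:
    """Mean negative log-likelihood of target token ids under per-step probability distributions."""
    if len(probs) != len(targets) or not targets:
        raise ValueError("need one distribution per target token and at least one token")
    return -sum(math.log(step[t]) for step, t in zip(probs, targets)) / len(targets)


def end_to_end_loss(ec_loss: float, lt_loss: float,
                    ec_weight: float = 0.3, lt_weight: float = 0.7) -> float:
    """Weighted sum of the two stage losses, with the LaTeX Translator weighted more heavily.

    The default weights are hypothetical placeholders, not values reported by the authors.
    """
    if lt_weight <= ec_weight:
        raise ValueError("LaTeX Translator weight should exceed Error Corrector weight")
    return ec_weight * ec_loss + lt_weight * lt_loss


# Toy per-step distributions over a 2-token vocabulary for each stage's target sequence.
ec_loss = token_nll([[0.9, 0.1], [0.2, 0.8]], targets=[0, 1])
lt_loss = token_nll([[0.6, 0.4], [0.3, 0.7]], targets=[0, 1])
print(f"total = {end_to_end_loss(ec_loss, lt_loss):.4f}")
```

Under this reading, the larger translator weight steers gradients toward getting the final LaTeX right, while the smaller corrector term still keeps the intermediate spoken-form text close to clean transcripts.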

For model evaluation, we extracted audio from real mathematics lectures on YouTube and manually labeled it to build a benchmark dataset.

@article{HyeonAAAI25,
  title={MathSpeech: Leveraging Small LMs for Accurate Conversion in Mathematical Speech-to-Formula},
  volume={39},
  url={https://ojs.aaai.org/index.php/AAAI/article/view/34595},
  DOI={10.1609/aaai.v39i23.34595},
  number={23},
  journal={Proceedings of the AAAI Conference on Artificial Intelligence},
  author={Hyeon, Sieun and Jung, Kyudan and Won, Jaehee and Kim, Nam-Joon and Ryu, Hyun Gon and Lee, Hyuk-Jae and Do, Jaeyoung},
  year={2025},
  month={Apr.},
  pages={24194--24202}
}